
AI, Great Friend or Dangerous Foe?

- Thread starter Ingomike

- Start date

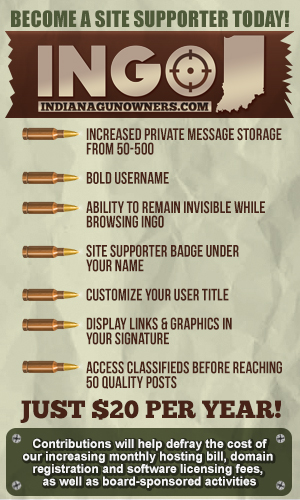

The #1 community for Gun Owners in Indiana

Member Benefits:

Fewer Ads! Discuss all aspects of firearm ownership Discuss anti-gun legislation Buy, sell, and trade in the classified section Chat with Local gun shops, ranges, trainers & other businesses Discover free outdoor shooting areas View up to date on firearm-related events Share photos & video with other members ...and so much more!

Member Benefits:

Unemployment in India is going to skyrocket.

> Unemployment in India is going to skyrocket.

I probably know ten call center manager types that live in $500k+ houses. They always thought computers would replace the low-level workers; now they're coming for the affluent middle class…

> I probably know ten call center manager types that live in $500k+ houses.

Sure hope those folks saved up... Hard times are around the corner!

> Unemployment in India is going to skyrocket.

We just launched our Voice AI for insurance agents. The thing will make hundreds of calls per minute, more if needed.

We pitch it to agents as having 60 American call center reps working for you. They're "on call" whenever you need them, and you only pay when you use them.

2,000 Workers Gone: Klarna Is First Major Company To Unleash Mass Layoffs Thanks To AI | ZeroHedge


> If your position can be replaced by automation, AI, or foreign labor, you need to learn a new skill.
>
> We can complain about it, but you're not putting this genie back in the bottle.

I am puking sick and tired of that answer. It fails to actually think this through to its logical conclusion. True AI does not need humans at all. A true AI might recognize that there is no need to produce tires; it has no need to go anywhere. Only inefficient humans need to go somewhere, and they can be eliminated to eliminate that need. Why would AI need to grow crops? It doesn't eat. True AI has no need for humans. We are building our doom as a species…

> True AI has no need for humans. We are building our doom as a species…

It’s not going to become sentient. Folks are watching too many science fiction movies.

It’s just another “assembly line” type of automation.

Is it going to put people out of work?

You better believe it.

And so did the internet. So did foreign call centers, for that matter.

The bottom line is it’s not going away.

Folks can stick their heads in the sand, waste half their days watching Netflix and their weekends pining over their favorite sports team, running after that dopamine hit OR they can spend their time wisely trying to adapt and learn new skills.

Speaking of Henry Ford inventions, you know what else he helped perpetuate?

The 40-hour workweek.

He cut workers’ hours to five 8-hour days a week. With the same pay.

You wanna know why?

Because to increase production worldwide, you need more consumers.

People consume more when they have more leisure time.

Why do you think kids are stuck in a classroom 5 days a week, 8 hours a day?

The system is conditioning folks to be consumers.

Consumers of entertainment, goods, activities, food etc.

Bread & circuses to the extreme.

Conditioned to put our trust in an employer and a stock market, and to hope that time and inflation, which we have no control over, are on our side.

It’s not.

I’m not necessarily disagreeing with you on many of the possible negative repercussions.

But I don’t think AI is going to turn into Skynet either.

So… I’m personally going to use it to help solve problems in the marketplace. Help provide value to my clients. Save me time on tasks AI is efficient in.


> It’s not going to become sentient. Folks are watching too many science fiction movies.

So my take is that this thread is more of an open philosophical discussion about the potential dangers of AI. It's definitely an interesting blurb on the radar, as AI in and of itself is currently just a tool. However, its application and how it will affect humanity at large is another can o' worms. I've seen both sides of the argument and have been a casual user of AI myself, but as someone who has been studying AI since before it became part of the common lexicon, I see problems that no one is asking about, and I don't think society is ready for them.


The first thing is the concept of progress and efficiency. We live in a society that strives for ever more efficiency and progress, but the question I ask is, "What is the limit? At what cost?" You are absolutely correct that the genie is out of the bottle, and that people have historically adapted to these increases in efficiency to stay relevant. However, what if there is an upper limit to this? What if there is a scenario where we cannot adapt to the change?

Let me put it another way. AI is shaping up to be capable of doing not only white-collar jobs; it will eventually be capable of doing EVERY job. Blue-collar work may resist this longer simply because of limits in physical infrastructure, but eventually those jobs will be lost too. At that point, what happens? I've heard some futurists predict this is where we'll be able to stop working and enjoy our free time. Ignoring the potential tyrannical and political ramifications of that, what if man wasn't meant to live without work? How would humanity change without the necessity to work? I personally don't believe it would be the utopia some would have us believe.

I don't think it's too far-fetched that at some point in the future progress actually regresses, simply because a previous system was actually better, or that we learn to use old and new technology in parallel. A good example is optical discs vs. digital media. Digital media is vastly more efficient and convenient, but optical discs are vastly superior for decentralized archival storage. The best approach is probably to use the advantages of both: SSDs and the like for short-term storage, and burning that data to M-Disc for long-term archiving. Many futurists are advocating the equivalent of eliminating all optical discs because they're less efficient, ignoring their advantages.

I'm not sure I trust the architects of this revolution either. After reading some of its fathers, specifically Steve Grand, I was shocked at how one-dimensional they are and how many assumptions they make out of hubris. Many of these architects see themselves as Dr. Frankenstein, trying to change the world with their own version of Promethean fire. When the corporate world and governments caught wind of this, it was off to the races without anyone asking "at what cost?" or "should we really?" Of course, I'm lamenting now; as a species we are not very good at asking ourselves those questions when there is something to prove or progress to be made.

Another thing is what happens when we completely base our lives and infrastructure on something that is very complicated and prone to failure.

Don't get me wrong, I am not saying you are wrong to embrace this. I guess my point is that I don't see this going straight down the path of adapting to the next order, like in Star Trek: The Next Generation or something. Ultimately I believe AI will do more harm than good, not because it will become "sentient," but because of evil designers using the system for evil, as well as the problems that arise from relying on a very complex, orderly, low-entropy system in a very chaotic, high-entropy universe.

As a side note, AI doesn't need to be sentient (I personally don't believe we as finite beings can create sentience) to appear sentient. And AI doesn't need to appear sentient to still be dangerous. It's a nonsensical allegory, but what if Skynet's programmers made its prime directive "to serve man," and it took that as "serve us for dinner" and launched the nukes to make sure we were properly cooked? It's silly, but anyone who has done programming will understand that one typo or one improper method can break or change the behavior of an entire program.

> It’s not going to become sentient. Folks are watching too many science fiction movies.

When the queen ant no longer needs the worker ants, they die. Otherwise known as the great reset.


> So my take on this thread personally is for more of an open philosophical discussion about the potential dangers of AI.

Best post of the thread!


> However, what if there is an upper limit to this? What if there is a scenario where we cannot adapt to the change?

Another limit is the speed of change for a physical human being, who is likely incapable of high-speed evolution…

AI will end gun crimes on Chicago's CTA!

www.nbcchicago.com


CTA implementing AI technology to detect guns on property

Roughly one million passengers board CTA buses and trains every single day across the Chicago area, a massive undertaking for police to help keep riders safe.


Technological progress has always brought some bad along with the good. Smartphones and video games have already created a generation of young people who are mostly too physically unfit to qualify for physically demanding jobs. In third-world countries, where people walk wherever they need to go and have to exert energy to get simple necessities like water, the population doesn't need to jog, take long walks, go to the gym, etc. for exercise.

AI will go as far as society allows it. Of course, you'll have the criminal element that will misuse it.

I don't think we are conditioned to be consumers because of the workweek or school week. They coincide because the majority of parents work days, so the schools are open during those hours. It's too bad that those multimillion-dollar monuments to bureaucracy (schools) sit idle most of the day and aren't utilized to the fullest. It is easy these days to be a consumer 24/7. All one needs is a smartphone and a Wi-Fi connection.

AI and military leaders raise alarm bells on China’s growing dominance in AI - Washington Examiner

Mark Milley and former Google CEO Eric Schmidt have raised alarm bells that the U.S. military remains unprepared to deal with China's rise in AI.

snapping turtle


"Skynet begins to learn rapidly and eventually becomes self-aware at 2:14 a.m., EDT, on August 29, 1997."

> "Skynet begins to learn rapidly and eventually becomes self-aware at 2:14 a.m., EDT, on August 29, 1997."

It's a slow learner, seeing as it's 2024 going on 2025.
